Thoughts on changes to TPS behavior in vSphere

This week VMware released a blog post and several Knowledge Base articles about Transparent Page Sharing (TPS). In the next patch for ESXi, TPS between virtual machines will be disabled by default. TPS is the technology that lets virtual machines running on the same ESXi host share identical memory pages, which in turn lets ESXi overcommit memory. It has been part of ESX/ESXi for years and is one of the (many) differentiating factors between it and other hypervisors.

VMware made this decision because independent research showed that, in a highly controlled environment, the contents of those shared pages could be read. Though VMware says it is highly unlikely that this would be possible in a production environment, they chose the security-conscious option and disabled the feature by default. It can be re-enabled if your environment would still benefit (see the KB articles mentioned above for instructions).

There have been a number of blog posts on this topic already and I don’t want to add to the noise, but I did want to share my perspective. I’ve been working with ESX/ESXi since 2002, so I’ve seen how TPS has been used over the years.

Will This Affect Me?

That’s likely the key question you’re asking yourself. One of the things I’ve seen over the years is that many folks don’t really understand how TPS works with modern processors. On modern processors, ESXi backs all guest pages with large (2MB) memory pages to improve performance, even if the guest OS itself doesn’t support large pages. ESXi doesn’t attempt to share large pages, so in the vast majority of cases TPS is actually not used. Only when an ESXi host comes under extreme memory pressure does it break large pages into small (4KB) pages and begin to share them.

I’ll state that again to be clear – unless you consistently over-commit memory on your ESXi hosts, TPS is not actually in use. So does this really affect you? Chances are it probably doesn’t unless you rely heavily on overcommitting memory.
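To make the large page versus small page distinction concrete, here is a rough Python sketch of content-based page sharing. It is only an illustration, not how ESXi is implemented: the function name, the choice of SHA-256, and the sample memory layout are all my own assumptions. It splits one 2MB large page into 4KB small pages and counts how many distinct pages would need backing if identical ones were shared.

```python
import hashlib

SMALL_PAGE = 4 * 1024         # 4KB small page
LARGE_PAGE = 2 * 1024 * 1024  # 2MB large page


def share_small_pages(memory):
    """Split memory into 4KB pages and return (total_pages, unique_pages).

    Hypothetical helper for illustration only.
    """
    unique = {}  # digest -> page contents
    total = 0
    for offset in range(0, len(memory), SMALL_PAGE):
        page = memory[offset:offset + SMALL_PAGE]
        digest = hashlib.sha256(page).digest()
        # Only treat pages as shareable when the full contents match,
        # not just the hash.
        if digest in unique and unique[digest] != page:
            raise ValueError("hash collision between different pages")
        unique.setdefault(digest, page)
        total += 1
    return total, len(unique)


# One 2MB large page: 511 zeroed small pages plus one small page of data.
memory = bytes(LARGE_PAGE - SMALL_PAGE) + b"\x01" * SMALL_PAGE
total, unique = share_small_pages(memory)
print(total, unique)  # 512 2
```

Treated as a single 2MB page, that region could only be shared with another region that is identical across the entire 2MB, which is far less likely. Broken into small pages, 512 pages collapse to 2.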

What About Zero Pages?

There’s one area where TPS kicks in regardless of whether the ESXi host is over-committed on memory: zero pages. When a VM powers on, most modern operating systems zero out all of their allocated memory (meaning they write zeros to every memory page). On ESXi, TPS shares those pages right after the VM powers on. It doesn’t matter whether memory is over-committed, and it doesn’t wait for the normal 60-minute cycle in which TPS scans for identical pages.

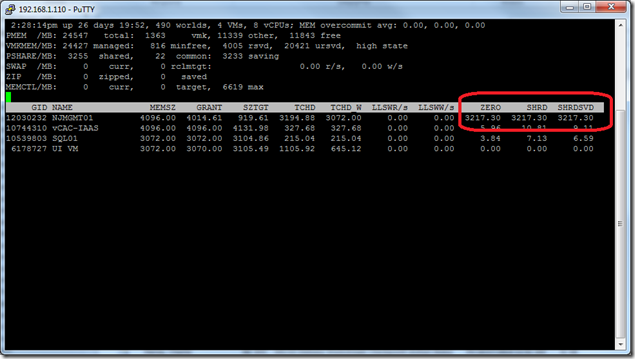

As an example, see the esxtop screenshot above and pay close attention to the ZERO and SHRD columns. I had just powered on NJMGMT01, and you can see in the highlighted columns that 3.2GB of its allocated 4.0GB is currently being shared (see the SHRD column). Of those shared pages, 100% are zero pages (see the ZERO column).
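The arithmetic behind those esxtop numbers is simple, but it helps to spell it out. This is a small sketch using only the two figures from the screenshot (3.2GB shared, 4.0GB allocated); the function name is hypothetical, and it ignores the single physical page that backs all of the shared zero pages.

```python
def sharing_summary(shared_gb, allocated_gb):
    """Return (fraction_shared, gb_needing_private_backing)."""
    fraction = shared_gb / allocated_gb
    # Whatever is not shared still needs its own physical memory.
    private_gb = round(allocated_gb - shared_gb, 2)
    return fraction, private_gb


fraction, private_gb = sharing_summary(3.2, 4.0)
print(f"{fraction:.0%} shared, {private_gb} GB privately backed")
```

In other words, right after power-on this VM only needs a fraction of its 4.0GB allocation to be backed by its own physical memory, and that share grows as the guest starts touching its zeroed pages.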

If that host were already overcommitted on memory, sharing the zero pages and having the OS consume them slowly could give ballooning time to kick in and reclaim memory from other guests. Without TPS in this scenario, ESXi would likely be forced to swap or compress memory before ballooning could reclaim unused memory from other guests.

Does this mean there’s a real risk to disabling TPS? Below I go through a number of scenarios where it may or may not make sense to re-enable TPS, but I don’t think zero pages on their own are one of them. If you already make use of memory over-commitment then you’ll want to re-enable it either way. If not, as long as you properly plan your environment, sharing zero pages shouldn’t matter except possibly in the event of an ESXi host failure.

Should I re-enable TPS?

The next question you might be asking yourself is: should I re-enable TPS once it’s disabled by default in the next update? As always it comes down to your individual requirements so there is no “one size fits all” answer. Let’s look at some scenarios to better illustrate the situation.

Production workloads

I’ve worked with a lot of customers over the years to help virtualize business critical applications, and I’ve seen one thing remain consistent: for business critical applications (and most production workloads in general), customers do not overcommit memory. For the most critical workloads, customers will use memory reservations to ensure those workloads always have access to the memory they need.

These days, it’s not uncommon to see ESXi hosts with 256GB to 1TB of RAM so overcommitting memory is an unlikely scenario. I’d say in most cases it’s unnecessary to re-enable TPS for production workloads.

VDI

If there’s a scenario where it seems like TPS was created just to solve a specific problem, it would be VDI. Consider a typical VDI environment using non-persistent desktops: there could be 50 to 100 identical virtual desktops running on each ESXi host. The potential memory savings in this scenario are huge, especially since high consolidation ratios can help drive down the cost of VDI.

For VDI, I think it makes a lot of sense to re-enable TPS to take advantage of the huge memory savings potential.
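For a sense of scale, here is a back-of-the-envelope sketch of what sharing could save on a VDI host. Everything in it is an assumption for illustration: the function name, the 4GB desktop size, and especially the 25% shareable fraction are made-up inputs, not measurements. It also uses an idealized model in which every shareable page is identical across all desktops, so only one copy needs backing.

```python
def vdi_memory_estimate(desktops, gb_per_desktop, shareable_fraction):
    """Return (configured_gb, backed_gb) under an idealized sharing model."""
    configured = desktops * gb_per_desktop
    shareable = configured * shareable_fraction
    # Identical pages collapse to a single copy, so one desktop's worth
    # of the shareable set still has to be backed.
    one_copy = gb_per_desktop * shareable_fraction
    saved = shareable - one_copy
    return configured, configured - saved


# Hypothetical host: 100 desktops, 4GB each, 25% of pages shareable.
configured, backed = vdi_memory_estimate(100, 4, 0.25)
print(configured, backed)  # 400 301.0
```

Real savings depend on how similar the desktops actually are and on whether large pages are being broken up at all, so treat the output as an upper bound for the inputs you choose rather than a prediction.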

Dev/Test

In development environments, you’ll often want to increase consolidation ratios since performance is not typically the most important factor. Development environments also have the potential to have many similar workloads (web servers, database servers, etc.) as new versions of applications are tested.

For dev/test environments, I think it makes sense to re-enable TPS.

Home Labs

I think this one is easy – most of us don’t have large budgets to support our home labs so memory is tight. In my lab I frequently overcommit memory, so I will be re-enabling TPS after the update.

Everything else?

I only covered a small portion of the potential situations that could be out there. You’ll need to make a decision based on your own individual requirements. Think about what’s most important – maximum performance, consolidation ratios, security, etc.?

Remember – due to the way TPS works with large pages, in most scenarios you’re probably not even using it today. In the event that you need to overcommit memory – such as during an ESXi host failure or DR scenario – ESXi has other methods of memory reclamation such as ballooning and swapping. Swapping is generally the last resort and results in significant performance reduction, but you can offset that a bit by using an SSD and leveraging the swap to host cache feature of ESXi.

I admit I used a lot of words to basically say, “Don’t worry about it, this probably doesn’t affect you anyway,” but I wanted to lay out the common scenarios I see at customers. I believe VMware made the right move in disabling TPS out of an abundance of caution, even if the exploit is unlikely to be practical in production.

Are you re-enabling TPS? Feel free to leave a comment on why or why not.